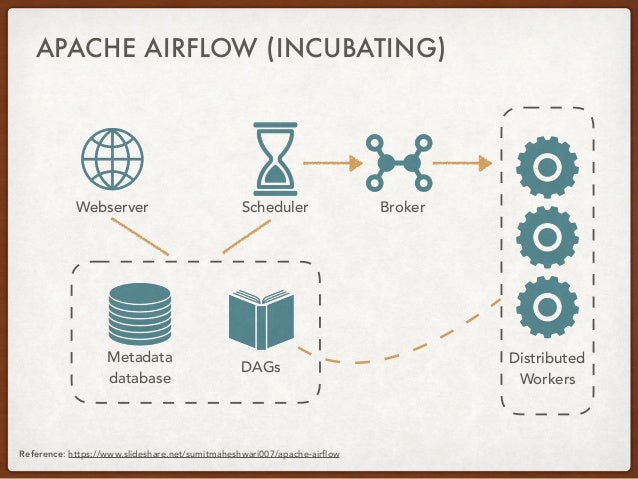

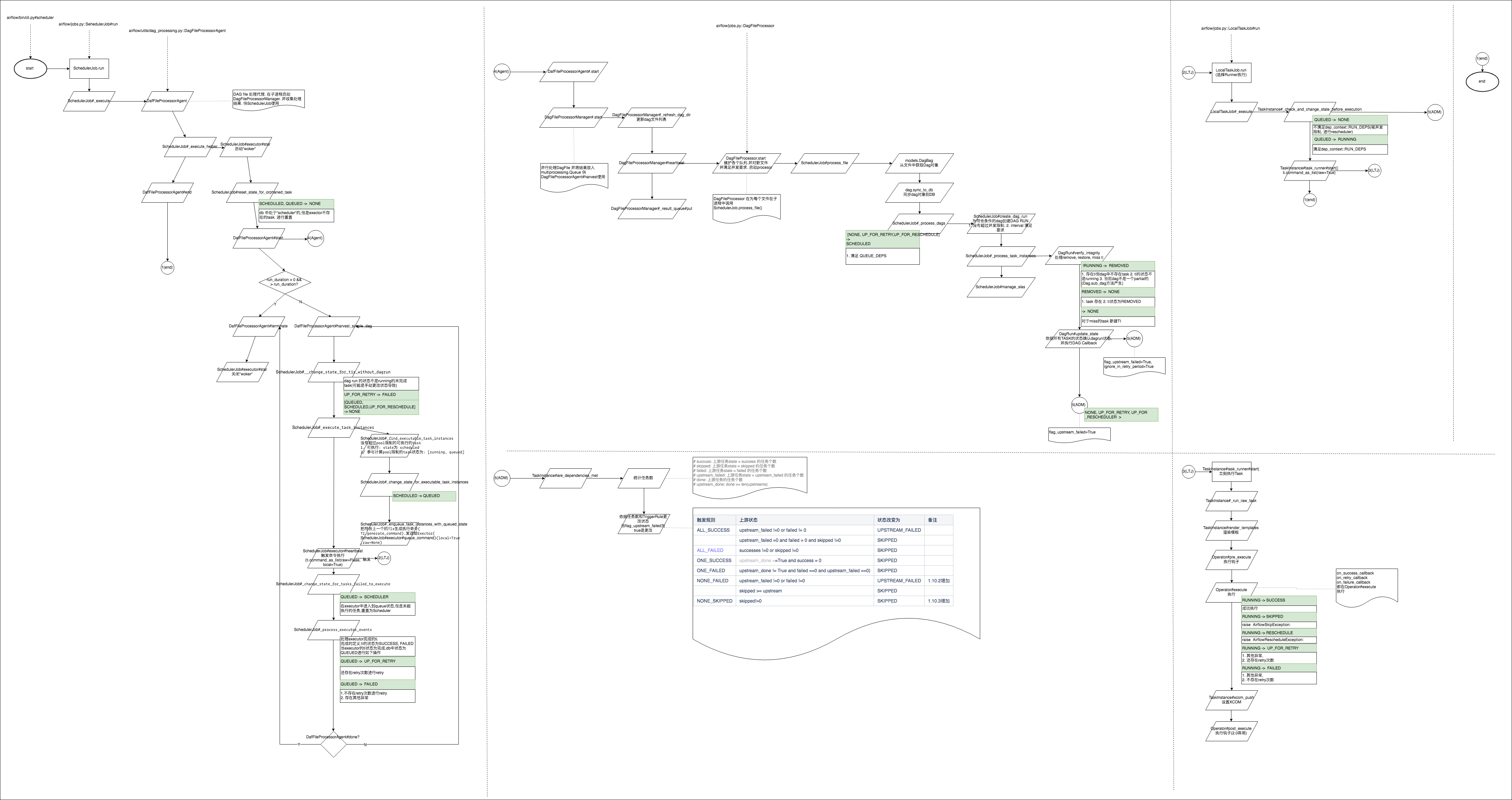

Then I killed the process with kill -11 and loaded the core in gdb, and below is the stack trace.

I ran strace on the stuck process, and it shows the following:

`futex(0x14d9390, FUTEX_WAIT_PRIVATE, 0, NULL`

Whenever the process gets stuck, it doesn't respond to any kill signal except 9 and 11. All system vitals such as disk, CPU, and memory are absolutely fine whenever the hang happens for us.

Process 5978: /usr/local/bin/python /usr/local/bin/airflow scheduler -n -1

- `_execute_helper (airflow/jobs/scheduler_job.py:1443)`
- `_validate_and_run_task_instances (airflow/jobs/scheduler_job.py:1505)`
- `heartbeat (airflow/executors/base_executor.py:134)`
- `sync (airflow/executors/celery_executor.py:247)`
- `_repopulate_pool (multiprocessing/pool.py:241)`
- `_bootstrap (multiprocessing/process.py:317)`
- `_flush_std_streams (multiprocessing/util.py:435)`

Process 5977: /usr/local/bin/python /usr/local/bin/airflow scheduler -n -1

- `_execute (airflow/jobs/scheduler_job.py:1382)`
- `_execute_helper (airflow/jobs/scheduler_job.py:1415)`
- `__init__ (multiprocessing/popen_fork.py:20)`
- `_launch (multiprocessing/popen_fork.py:74)`

Collecting samples from 'airflow scheduler - DagFileProcessorManager' (python v3.7.8)

Process 18: airflow scheduler - DagFileProcessorManager (regardless of the CPU usage)

- `_bootstrap (multiprocessing/process.py:297)`
- `_run_processor_manager (airflow/utils/dag_processing.py:624)`
- `start (airflow/utils/dag_processing.py:886)`
- `_send_bytes (multiprocessing/connection.py:404)`
- `_send (multiprocessing/connection.py:368)`

The same thing happens even after restarting the scheduler pod.

The scheduler does not appear to be running. Last heartbeat was received 5 minutes ago.

INFO - Resetting orphaned tasks for active dag runs

No jobs get submitted and everything comes to a halt. It looks like it goes into some kind of infinite loop. The only way I could make it run again is by manually restarting the scheduler service. But again, after running some tasks, it gets stuck. I've tried both the Celery and Local executors, but the same issue occurs. I am using the -n 3 parameter when starting the scheduler. I would be happy to provide any other information needed.
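The "Last heartbeat was received 5 minutes ago" message above amounts to a staleness check on a timestamp, and the same idea could drive an external watchdog instead of a manual restart. The following is only a minimal sketch: the function name `heartbeat_is_stale` and the 30-second threshold are assumptions made for illustration, not values taken from Airflow or from this report, and how the last heartbeat is obtained is left open.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold, chosen for illustration only; tune it for your deployment.
STALE_AFTER = timedelta(seconds=30)


def heartbeat_is_stale(last_heartbeat, now=None, stale_after=STALE_AFTER):
    """Return True if last_heartbeat is older than stale_after.

    Both timestamps are expected to be timezone-aware datetimes.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_heartbeat > stale_after
```

A watchdog could call this periodically and restart the scheduler service when it returns True. That works around the hang but does not explain it.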

The scheduler gets stuck without a trace or error. When this happens, the CPU usage of the scheduler service is at 100%.
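Because the hang leaves no trace, one option for a Python process in general is the standard library's `faulthandler` module, which can dump every thread's Python traceback when a chosen signal arrives. Its handler runs at the C level, so it may still fire when the interpreter itself is blocked, but it has to be registered before the hang occurs. This is a sketch under assumptions: the helper names `install_stack_dump_handler` and `demo`, the choice of SIGUSR1, and the log path are illustrative, and whether this can be wired into the scheduler's startup in your setup is not something this report confirms. `faulthandler.register` is not available on Windows.

```python
import faulthandler
import os
import signal
import tempfile


def install_stack_dump_handler(log_path, signum=signal.SIGUSR1):
    """Dump all thread tracebacks to log_path whenever signum is received.

    The file object is returned because it must stay open for as long as
    the handler is registered.
    """
    log_file = open(log_path, "a")
    faulthandler.register(signum, file=log_file, all_threads=True)
    return log_file


def demo():
    """Register the handler, signal this process, and return the dump text."""
    path = os.path.join(tempfile.mkdtemp(), "stacks.log")
    log_file = install_stack_dump_handler(path)
    os.kill(os.getpid(), signal.SIGUSR1)  # simulate `kill -USR1 <pid>`
    faulthandler.unregister(signal.SIGUSR1)
    log_file.close()
    with open(path) as fh:
        return fh.read()


if __name__ == "__main__":
    print(demo())
```

With something like this in place, sending `kill -USR1 <pid>` to a stuck process would append the Python-level stacks to the log file, which complements the strace and gdb output.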
